EKF SLAM on Turtlebot3

ME 495: Sensing, Navigation, and Machine Learning for Robotics | Michael Jenz | Winter 2026

Skills: ROS 2, C++, Extended Kalman Filter, LiDAR Processing, SLAM, Sensor Fusion

Summary

This project is the culmination of ME 495: Sensing, Navigation, and Machine Learning for Robotics. It implements Simultaneous Localization and Mapping (SLAM) using an Extended Kalman Filter (EKF) on a Turtlebot3, validated in both simulation and on hardware.

The system detects cylindrical obstacles from raw 2D LiDAR point clouds, associates them with a growing landmark map, and continuously corrects the robot's estimated pose. In simulation, the SLAM estimate converged to within 1 mm of ground truth. On hardware, its final position error was roughly 2.5 times smaller than that of pure odometry (0.026 m versus 0.065 m).

Results

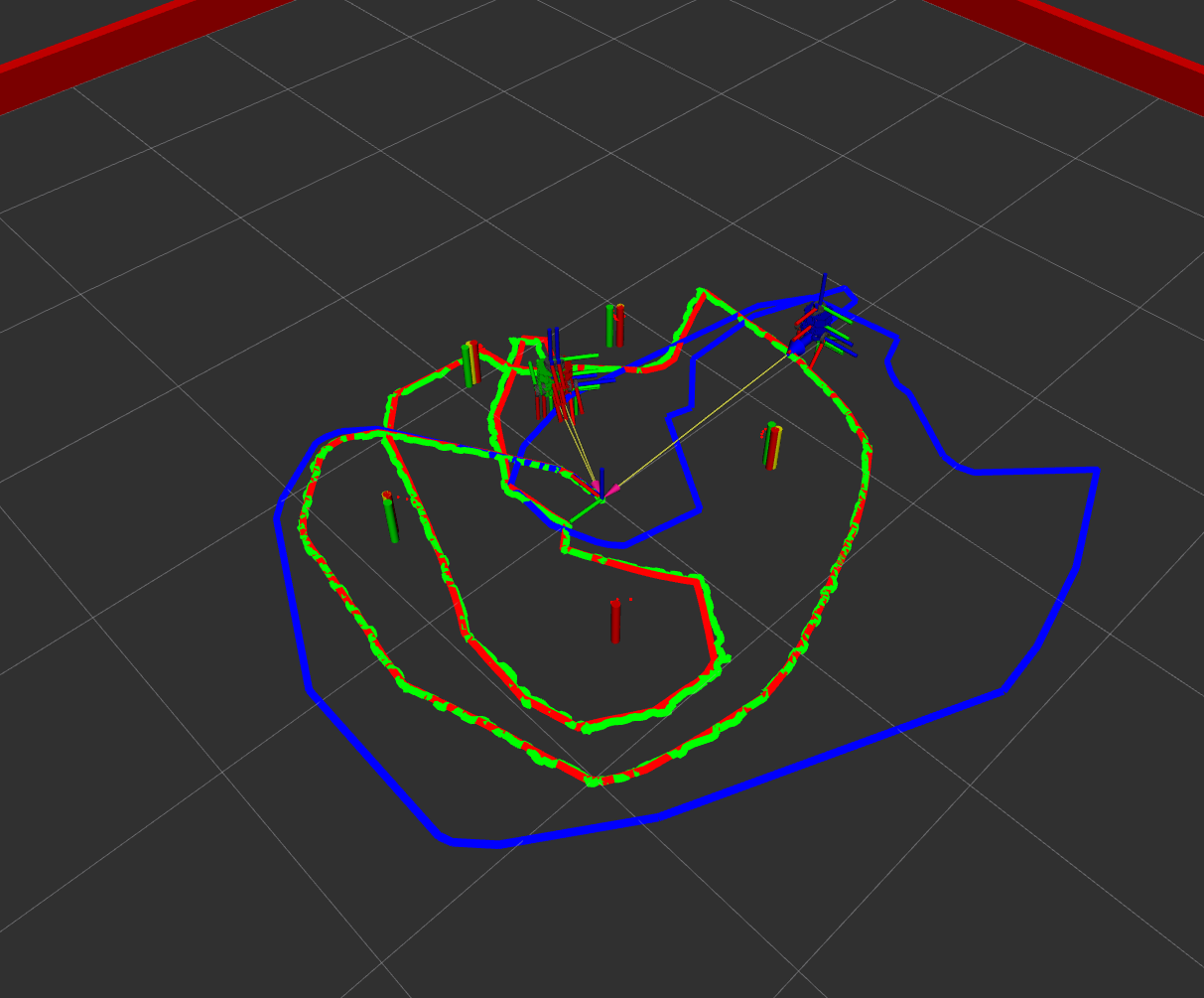

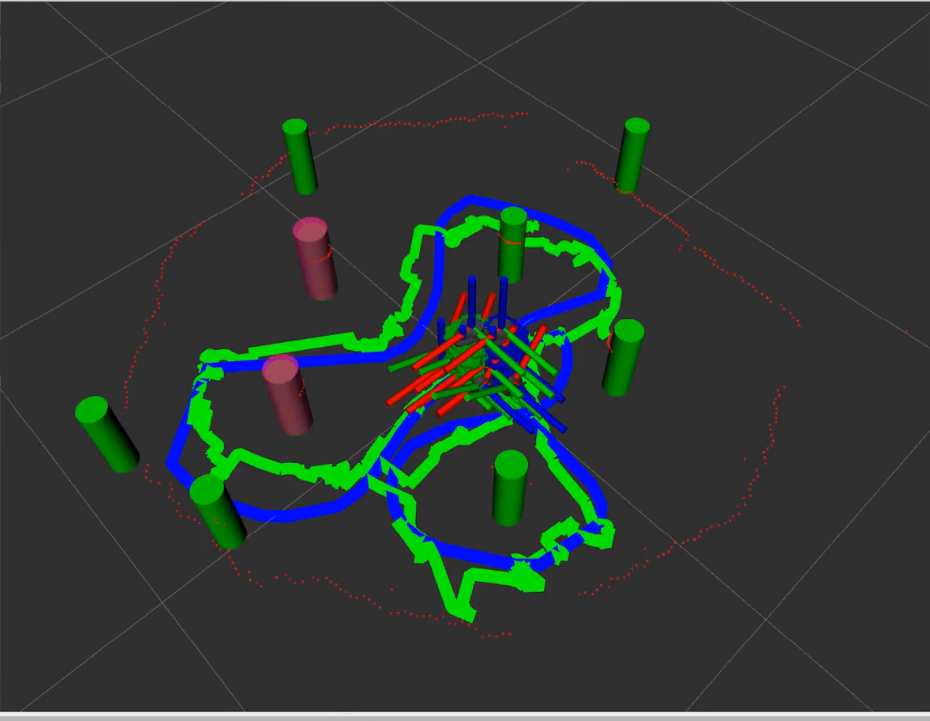

All three robot representations — ground truth (red), odometry (blue), and SLAM (green) — are visualized simultaneously in RViz. The tables below summarize final position error after a closed-loop traversal of the environment.

Simulation

| Estimate | x (m) | y (m) | θ (°) | Distance from Truth |
|---|---|---|---|---|
| Ground truth (red) | 0.0725 | −0.0153 | −2.469 | N/A |
| Odometry (blue) | 0.2698 | 0.4571 | −2.813 | 0.512 m |
| SLAM (green) | 0.0723 | −0.0149 | −2.435 | 0.0005 m (< 1 mm) |

Hardware

| Estimate | x (m) | y (m) | θ (°) | Distance from Start |
|---|---|---|---|---|
| Odometry (blue) | −0.0225 | 0.0613 | −0.555 | 0.065 m |
| SLAM (green) | 0.0264 | −0.0016 | −1.822 | 0.026 m (≈2.5× improvement) |

System Architecture

The project is organized as a set of ROS 2 packages and C++ libraries that each handle a distinct stage of the SLAM pipeline:

- nusim — Simulates the robot, environment, and sensors, providing ground truth for evaluation.

- nuturtle_control — Translates velocity commands into wheel motion and integrates encoder data into an odometry estimate.

- detectlib — Processes raw LiDAR scans to detect, classify, and localize cylindrical landmarks.

- ekflib / nuslam — Fuses odometry and landmark measurements via an EKF to jointly estimate robot pose and a growing landmark map.

Simulation — nusim

- Provides a fully configurable simulator for the Turtlebot3, including a world of obstacles and walls.

- Models realistic wheel slip and input noise so behavior closely matches the physical robot.

- Publishes a simulated 2D LiDAR scan and maintains a ground truth pose for quantitative evaluation.
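The slip and input-noise bullet can be illustrated with a small sketch. This is one plausible model, not necessarily the one nusim uses: zero-mean Gaussian noise is added to a nonzero commanded wheel speed, and a uniformly distributed slip fraction makes the encoders see a slightly different rotation than the one that moves the robot. The names `WheelNoiseModel`, `WheelStep`, and `simulate_wheel` are hypothetical.

```cpp
#include <cassert>
#include <random>

// Hypothetical parameter names; assumed for illustration only.
struct WheelNoiseModel {
    double input_noise_std;  // std dev of Gaussian noise on wheel speed (rad/s)
    double slip_fraction;    // slip is drawn uniformly from [-slip, +slip)
};

struct WheelStep {
    double body_speed;     // wheel speed used to move the true robot pose
    double encoder_speed;  // wheel speed the encoders observe (includes slip)
};

// Apply input noise and slip to a single commanded wheel speed.
WheelStep simulate_wheel(double commanded_speed, const WheelNoiseModel& model,
                         std::mt19937& rng)
{
    assert(model.input_noise_std >= 0.0 && model.slip_fraction >= 0.0);

    double v = commanded_speed;
    // Only perturb a moving wheel, so a stopped robot stays stopped.
    if (commanded_speed != 0.0 && model.input_noise_std > 0.0) {
        std::normal_distribution<double> noise(0.0, model.input_noise_std);
        v += noise(rng);
    }

    double eta = 0.0;
    if (model.slip_fraction > 0.0) {
        std::uniform_real_distribution<double> slip(-model.slip_fraction,
                                                    model.slip_fraction);
        eta = slip(rng);
    }

    return WheelStep{v, v * (1.0 + eta)};
}
```

With both parameters set to zero the function returns the command unchanged, which gives a noise-free baseline for debugging the rest of the pipeline.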

Robot Control & Odometry — nuturtle_control

- The `turtle_control` node converts `cmd_vel` messages into wheel commands for both the simulator and the physical robot.

- The `odometry` node integrates encoder readings using differential-drive kinematics to produce a continuous pose estimate, the baseline that SLAM is measured against.

- Supports seamless switching between simulation and hardware via launch arguments, with no changes to the SLAM stack required.
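The encoder integration step can be sketched as follows. This is a minimal illustration of differential-drive dead reckoning, not the project's actual code: `Pose2D`, `DiffDrive`, and `integrate_odometry` are hypothetical names, and the inputs are assumed to be wheel rotations in radians since the previous update.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical types for illustration; not the project's actual API.
struct Pose2D { double x; double y; double theta; };

struct DiffDrive {
    double wheel_radius;  // m
    double track_width;   // m, distance between the wheel centers
};

// Integrate one encoder step. dphi_left and dphi_right are the wheel
// rotations (rad) since the last update. The robot is assumed to follow
// a circular arc (constant twist) over the step.
Pose2D integrate_odometry(const Pose2D& pose, const DiffDrive& robot,
                          double dphi_left, double dphi_right)
{
    assert(robot.wheel_radius > 0.0 && robot.track_width > 0.0);

    // Distance travelled by the body center and change in heading.
    const double ds = robot.wheel_radius * (dphi_right + dphi_left) / 2.0;
    const double dtheta =
        robot.wheel_radius * (dphi_right - dphi_left) / robot.track_width;

    Pose2D next = pose;
    if (std::abs(dtheta) < 1e-9) {
        // Straight-line motion along the current heading.
        next.x += ds * std::cos(pose.theta);
        next.y += ds * std::sin(pose.theta);
    } else {
        // Motion along an arc of signed radius r.
        const double r = ds / dtheta;
        next.x += r * (std::sin(pose.theta + dtheta) - std::sin(pose.theta));
        next.y -= r * (std::cos(pose.theta + dtheta) - std::cos(pose.theta));
    }
    // Keep the heading wrapped to (-pi, pi].
    next.theta = std::atan2(std::sin(pose.theta + dtheta),
                            std::cos(pose.theta + dtheta));
    return next;
}
```

Because each step builds on the previous pose, any encoder or slip error accumulates without bound, which is why the odometry estimate drifts and serves only as the baseline.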

LiDAR Landmark Detection — detectlib

Raw LiDAR scans are processed in three stages before being handed off to the EKF:

- Clustering: Consecutive LiDAR returns within a distance threshold are grouped into clusters, each a candidate obstacle.

- Classification: A `CircularClassifier` uses the inscribed angle theorem (the chord joining an arc's endpoints subtends the same angle at every point on a circular arc) to distinguish circular obstacles from linear features such as walls.

- Regression: A `CircularRegressor` fits a circle to the cluster of points, outputting the radius and center of the potential obstacle.
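The clustering and classification stages can be sketched as below. This is an illustration under stated assumptions rather than the detectlib implementation: `cluster_scan` and `looks_circular` are hypothetical names, the points are assumed to be already converted to Cartesian coordinates, and the default thresholds (mean inscribed angle between 90° and 135°, standard deviation under 0.15 rad) are illustrative values that would need tuning. The mean-angle check matters because a straight wall also yields a nearly constant angle, but one close to 180°.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

struct Point2D { double x; double y; };

constexpr double kPi = 3.14159265358979323846;

// Group consecutive returns whose spacing is below dist_threshold (m).
// Wrap-around between the last and first beam is omitted for brevity.
std::vector<std::vector<Point2D>> cluster_scan(
    const std::vector<Point2D>& points, double dist_threshold)
{
    assert(dist_threshold > 0.0);
    std::vector<std::vector<Point2D>> clusters;
    for (const Point2D& p : points) {
        if (!clusters.empty()) {
            const Point2D& last = clusters.back().back();
            if (std::hypot(p.x - last.x, p.y - last.y) < dist_threshold) {
                clusters.back().push_back(p);
                continue;
            }
        }
        clusters.push_back(std::vector<Point2D>{p});  // start a new cluster
    }
    return clusters;
}

// Inscribed-angle test: for each interior point P, measure the angle
// between the rays from P to the two cluster endpoints. On a circular arc
// these angles are (nearly) equal; thresholds are illustrative assumptions.
bool looks_circular(const std::vector<Point2D>& cluster,
                    double min_mean_rad = kPi / 2.0,
                    double max_mean_rad = 3.0 * kPi / 4.0,
                    double max_std_rad = 0.15)
{
    if (cluster.size() < 4) {
        return false;  // too few points to judge
    }
    const Point2D& a = cluster.front();
    const Point2D& b = cluster.back();
    const double n = static_cast<double>(cluster.size() - 2);

    double sum = 0.0;
    double sum_sq = 0.0;
    for (std::size_t i = 1; i + 1 < cluster.size(); ++i) {
        const double ax = a.x - cluster[i].x, ay = a.y - cluster[i].y;
        const double bx = b.x - cluster[i].x, by = b.y - cluster[i].y;
        // atan2(|cross|, dot) gives the unsigned angle in [0, pi].
        const double angle =
            std::atan2(std::abs(ax * by - ay * bx), ax * bx + ay * by);
        sum += angle;
        sum_sq += angle * angle;
    }
    const double mean = sum / n;
    const double variance = std::max(0.0, sum_sq / n - mean * mean);
    return mean >= min_mean_rad && mean <= max_mean_rad &&
           std::sqrt(variance) <= max_std_rad;
}
```

Clusters that pass this test would then go to the circle-fitting stage; tightening `max_std_rad` trades missed detections for fewer walls classified as obstacles.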

EKF State Estimation — ekflib / nuslam

- The state vector jointly tracks robot pose and all observed landmark positions, so every landmark correction also improves the pose estimate.

- On each odometry update, the filter propagates state through a nonlinear motion model and inflates uncertainty accordingly.

- When a landmark measurement arrives, a Kalman gain is computed and both the pose estimate and the landmark map are corrected simultaneously.

- Implements unknown data association via Mahalanobis distance: each measurement is matched to the nearest known landmark if within a tunable threshold, otherwise it seeds a new map entry — allowing the map to grow incrementally without pre-labeled observations.
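The data association rule in the last bullet can be sketched as follows. This is a simplified illustration, not the ekflib code: `Vec2`, `Mat2`, `Candidate`, and `associate` are hypothetical names, and the caller is assumed to have already computed, for each known landmark, the innovation (measurement minus predicted measurement, with any bearing component wrapped) and its 2×2 innovation covariance. The gate is compared against the squared Mahalanobis distance.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

struct Vec2 { double a; double b; };         // innovation, e.g. range and bearing
struct Mat2 { double m00, m01, m10, m11; };  // 2x2 innovation covariance

// Squared Mahalanobis distance d^T S^{-1} d, using the closed-form
// inverse of a 2x2 matrix.
double mahalanobis_sq(const Vec2& d, const Mat2& S)
{
    const double det = S.m00 * S.m11 - S.m01 * S.m10;
    assert(det > 0.0);  // a valid covariance is positive definite
    const double u = ( S.m11 * d.a - S.m01 * d.b) / det;
    const double v = (-S.m10 * d.a + S.m00 * d.b) / det;
    return d.a * u + d.b * v;
}

// One entry per landmark already in the map.
struct Candidate {
    Vec2 innovation;
    Mat2 S;
};

// Returns the index of the closest landmark whose squared distance is
// below gate_sq, or -1 to signal that a new landmark should be created.
int associate(const std::vector<Candidate>& known, double gate_sq)
{
    int best = -1;
    double best_distance = gate_sq;
    for (std::size_t i = 0; i < known.size(); ++i) {
        const double d = mahalanobis_sq(known[i].innovation, known[i].S);
        if (d < best_distance) {
            best_distance = d;
            best = static_cast<int>(i);
        }
    }
    return best;
}
```

One common starting point for `gate_sq` is 5.99, the 95% chi-square value for two degrees of freedom. A looser gate merges more measurements into existing landmarks, while a tighter one creates new landmarks more readily.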

Reflections

Implementing all of these algorithms was difficult, but getting them working on hardware was harder still. Even starting from parameters tuned in simulation, the 2D LiDAR on the Turtlebot3 was noisier than in simulation and led to less predictable behavior from the object detection algorithm. In the process, I learned how critical the data association step is: if the algorithm misidentified an obstacle, it would send the SLAM estimate across the map. To combat this, I made my obstacle filtering thresholds much stricter, which in the end gave good enough performance.

In the results video you can see how infrequently the algorithm identifies a new obstacle (a pink obstacle pops up) because of these strict filtering parameters. Even so, the algorithm at times mistakes the walls for obstacles. Given the time constraints, I was not able to tune the algorithm further. However, these extra obstacles should not affect the performance of the algorithm, since they are initialized with extremely high uncertainty.

I learned a great deal while working on this project, having started with very little 'true' C++ experience. Looking forward, I am excited to take on more challenges like these in the field of robotics!